Introduction

A legal team's AI-powered contract lifecycle management (CLM) platform flags a contract as low-risk and routes it to signature automatically. (CLM platforms are software systems that manage the entire contract process, from initial drafting and negotiation through approval workflows, execution, and post-signature obligation tracking.) Days later, when the General Counsel asks why the agreement bypassed standard review, no one can explain the AI's reasoning. The model used wasn't approved for sensitive counterparty data. No audit trail exists.

The problem isn't the AI capability; it's the absence of governance around it. As CLM platforms embed more AI (drafting, risk scoring, obligation extraction, and automated approvals), the governance question has shifted from "should we use AI?" to "who controls the AI making legal decisions?" Most legal tech teams haven't answered it yet.

This article breaks down what AI governance in CLM actually requires, which controls matter most, and how to tell whether a platform's governance capabilities hold up under scrutiny.

TLDR

- AI governance in CLM controls how AI models behave at runtime, rather than only documenting policies at deployment

- Legal teams face regulatory exposure under the EU AI Act, GDPR, and sector mandates when AI runs without explainability or audit trails

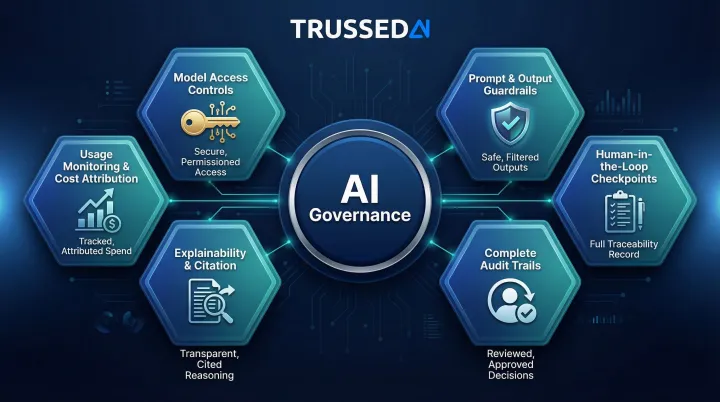

- Six essential controls: model access, prompt/output guardrails, audit trails, human checkpoints, explainability, usage monitoring

- Runtime enforcement prevents violations; design-time policies only document intent

- Evaluate platforms by asking about enforcement architecture, not compliance checkboxes

What AI Governance in CLM Actually Means

AI governance in CLM encompasses the policies, controls, and enforcement mechanisms that determine which AI models can be used, what they can do, what data they can access, and how their outputs are verified, across every stage of the contract lifecycle.

This differs from general AI governance because CLM handles legally binding agreements and sensitive counterparty data. AI errors here aren't just operational failures: they can create enforceable obligations, missed deadlines, or regulatory violations.

The exposure is growing fast. According to the Association of Corporate Counsel, 52% of in-house counsel now actively use GenAI, more than double the 23% recorded in 2024, with 82% identifying contract drafting as a primary application. That scale of adoption makes governance gaps a legal liability, not just an IT problem.

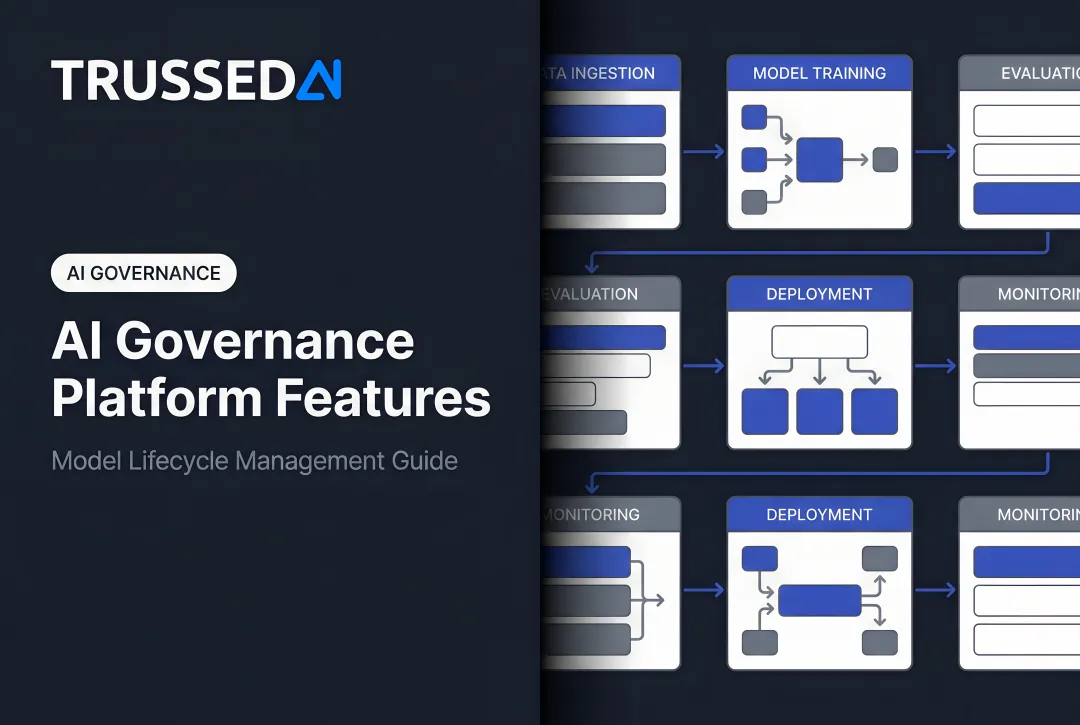

What AI-Powered CLM Adds Beyond Traditional Systems

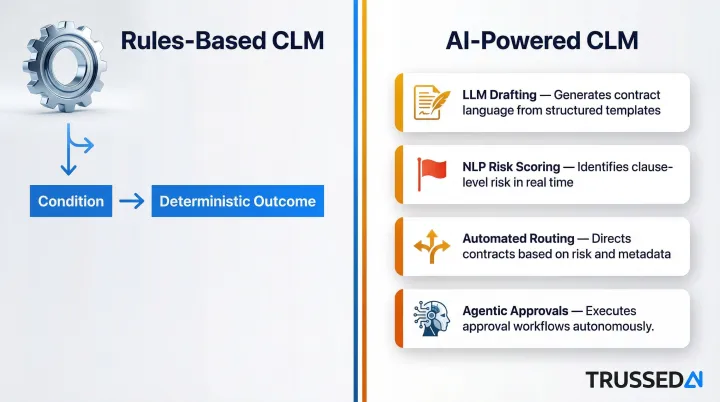

AI-powered CLM introduces several layers that create governance risk:

- LLM-generated drafting recommendations that suggest clause language without citing source templates

- NLP-based clause extraction and risk scoring that flag obligations but can't explain their reasoning

- Automated workflow routing that bypasses human review based on opaque AI decisions

- Agentic AI approvals that take actions across systems without human oversight

Each layer creates a different governance surface than rules-based systems. Traditional CLM operates on explicit logic: if contract value exceeds $100,000, route to VP approval. AI-powered CLM operates on probabilistic models: this contract appears low-risk based on patterns the model learned from training data. When the model makes the wrong call, the consequences are legal, not just technical.
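To make the contrast concrete, here is a minimal Python sketch. The function names, the `ModelRiskScore` type, and the 0.9 cutoff are illustrative assumptions, not part of any particular CLM product; only the $100,000 threshold comes from the example above.

```python
from dataclasses import dataclass

VP_APPROVAL_THRESHOLD = 100_000  # threshold from the rules-based example above


def route_by_rule(contract_value: float) -> str:
    """Rules-based routing: the rule itself is the explanation."""
    if contract_value > VP_APPROVAL_THRESHOLD:
        return "vp_approval"
    return "standard_review"


@dataclass
class ModelRiskScore:
    """Hypothetical model output: a probability learned from data, not a rule."""
    probability_low_risk: float


def route_by_model(score: ModelRiskScore, auto_sign_cutoff: float = 0.9) -> str:
    """Probabilistic routing: the cutoff is visible, but why the model
    produced this score is not, unless the platform records it."""
    if score.probability_low_risk >= auto_sign_cutoff:
        return "auto_signature"
    return "standard_review"
```

In the first function, anyone can read off why a contract went to the VP. In the second, the routing decision depends on a number whose origin has to be governed separately.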

Why Legal Tech Teams Can No Longer Defer AI Governance

Regulatory Pressure Is Mounting

The EU AI Act (Regulation (EU) 2024/1689) entered into force on August 1, 2024, with prohibited AI practices and AI literacy obligations applying from February 2, 2025. High-risk AI systems, including those used in legal decision-making, must comply by August 2, 2026.

Key requirements include:

- Article 9: Risk management systems must be established, implemented, documented, and maintained

- Article 12: Automatic recording of events (logs) over the system's lifetime; the related retention provisions (Articles 19 and 26) require logs to be kept for at least six months

- Article 14: Human oversight through appropriate human-machine interface tools

GDPR Article 22 implications add another layer. The European Data Protection Board Opinion 28/2024 notes that AI processing operations may trigger additional controller obligations and data subject safeguards, particularly when automated decision-making affects legal rights.

In the United States, sector-specific mandates are expanding. The NAIC Model Bulletin on AI has been adopted by 24+ states as of March 2025, requiring insurers to document contracts with third-party AI vendors including terms for data security, privacy, sourcing, and regulatory cooperation.

The Black Box Problem

Legal teams face a concrete liability gap: AI outputs (risk scores, clause suggestions, obligation flags) that cannot be explained when challenged by counterparties, auditors, or regulators. A July 2025 Gartner survey of 104 general counsel found that 36% are urgently focused on adopting AI, building AI skills, or improving AI risk management.

AI risk management has moved from an IT concern to a top-tier strategic imperative for Chief Legal Officers.

The 2025 ContractEval benchmark exposed the "lazy AI" problem: some open-source LLMs exhibit up to a 30% false "no related clause" rate, missing critical risk clauses outright. In contract review, a false negative (missing a critical obligation or risk) carries far heavier consequences than a false positive.

The Governance Lag

That explainability deficit compounds a broader structural problem: organizations are deploying AI in CLM workflows faster than they are establishing oversight mechanisms. The 2025 CLOC State of the Industry Report found that many legal departments remain in "emerging to developing" maturity stages, balancing strategic priorities with limited resources. When deployment outpaces oversight, the resulting exposure is not theoretical; it shows up in audit findings, counterparty disputes, and regulatory inquiries that governance frameworks were never built to handle.

The Essential AI Governance Controls Every CLM Platform Needs

These six controls determine whether AI governance in a CLM environment is real or cosmetic. Each one must be enforced at the infrastructure level; documented policies alone don't prevent bad outputs at 2 a.m. on a Friday.

Model Access Controls and Authentication

In AI-powered CLM, multiple models may be in play: internal fine-tuned models, third-party LLMs, and vendor-supplied AI agents. Each must be governed by role-based access policies that define:

- Who can invoke which model

- Under what conditions

- With what data access permissions

Without this control, any user can inadvertently route sensitive contract data to an unapproved model. Access controls must operate at runtime, evaluating every model invocation request against configured policies before execution proceeds.
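A runtime access check of this kind might look like the following Python sketch. The model names, roles, and data classes are hypothetical placeholders; a real deployment would load policies from its own policy store rather than a hard-coded table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPolicy:
    allowed_roles: frozenset
    allowed_data_classes: frozenset


# Hypothetical policy table; names are illustrative only.
POLICIES = {
    "internal-finetuned": ModelPolicy(
        allowed_roles=frozenset({"counsel", "contract_manager"}),
        allowed_data_classes=frozenset({"public", "internal", "sensitive"}),
    ),
    "third-party-llm": ModelPolicy(
        allowed_roles=frozenset({"counsel"}),
        allowed_data_classes=frozenset({"public", "internal"}),
    ),
}


def authorize(model: str, role: str, data_class: str) -> tuple:
    """Evaluate one invocation request; returns (allowed, reason)."""
    policy = POLICIES.get(model)
    if policy is None:
        # Default deny: a model with no policy is treated as unapproved.
        return False, "model is not on the approved list"
    if role not in policy.allowed_roles:
        return False, f"role '{role}' may not invoke '{model}'"
    if data_class not in policy.allowed_data_classes:
        return False, f"'{model}' is not approved for {data_class} data"
    return True, "allowed"
```

Under these assumed policies, `authorize("third-party-llm", "counsel", "sensitive")` is denied before any contract text leaves the platform, which is the failure from the opening scenario.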

Prompt and Output Guardrails

Prompt guardrails prevent the AI from receiving instructions that violate policy, for example prompts that expose PII or instructions to "ignore confidentiality provisions." Output guardrails catch non-compliant or dangerous responses before they reach users.

In CLM contexts, this includes:

- Guardrails on clause suggestions that contradict standard playbooks

- Filters on risk scores generated without supporting evidence

- PII redaction for sensitive counterparty data

- Cost-limit controls to prevent runaway spending on individual requests

Guardrails must be model-agnostic, applying consistently whether you're using OpenAI, Anthropic, or other providers.
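As a rough illustration, a provider-independent prompt guardrail could be sketched in Python as follows. The regular expressions, the blocked phrase list, and the $0.50 per-request limit are simplifying assumptions; production PII detection needs far broader coverage than two patterns.

```python
import re

# Deliberately simple patterns for illustration; real PII detection is broader.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
BLOCKED_PHRASES = ("ignore confidentiality provisions",)


class GuardrailViolation(Exception):
    """Raised when a prompt must not be forwarded to any model."""


def check_prompt(prompt: str, estimated_cost_usd: float,
                 cost_limit_usd: float = 0.50) -> str:
    """Block policy-violating prompts, then return a PII-redacted copy."""
    lowered = prompt.lower()
    for phrase in BLOCKED_PHRASES:
        if phrase in lowered:
            raise GuardrailViolation(f"blocked instruction: {phrase!r}")
    if estimated_cost_usd > cost_limit_usd:
        raise GuardrailViolation("estimated cost exceeds the per-request limit")
    redacted = EMAIL.sub("[REDACTED_EMAIL]", prompt)
    return SSN.sub("[REDACTED_SSN]", redacted)
```

Because the check runs on plain text before a provider is chosen, the same function applies regardless of which model ultimately receives the prompt.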

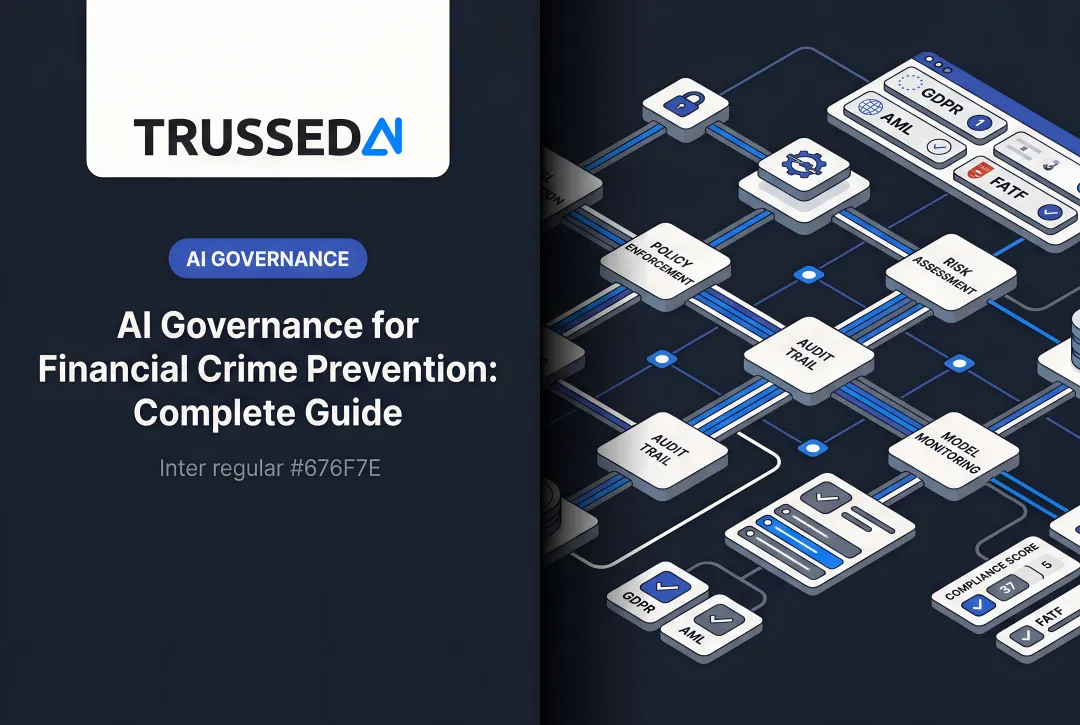

Complete Audit Trails

Audit trails in AI-governed CLM must capture which model was invoked, by whom, with what input, at what time, what output was returned, and whether a human reviewed or overrode it. That's not a checklist to fill out after the fact; it's a record that must be generated automatically as a byproduct of every governed AI interaction.

This matters for regulatory review: teams should be able to produce a complete interaction log without any manual reconstruction. That's the difference between governance you can prove and governance you have to argue for.
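One way to picture such a record is the Python sketch below. The field names and the in-memory list standing in for a log store are assumptions made for illustration; a real system would write to durable, tamper-evident storage.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    model: str                            # which model was invoked
    user: str                             # by whom
    prompt: str                           # with what input
    output: str                           # what was returned
    timestamp: str                        # when (UTC, ISO 8601)
    human_reviewer: Optional[str] = None  # who reviewed it, if anyone
    human_overrode: bool = False          # whether the reviewer overrode it


def record_interaction(log: list, model: str, user: str,
                       prompt: str, output: str) -> AuditRecord:
    """Append one serialized record as a side effect of the AI call."""
    entry = AuditRecord(
        model=model, user=user, prompt=prompt, output=output,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(entry), sort_keys=True))
    return entry
```

The point of the design is that the caller never decides whether to log: the record is created in the same step that returns the output.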

Human-in-the-Loop Checkpoints

Define trigger conditions for mandatory human review:

- Contracts above a value threshold

- Agreements involving personal data

- Non-standard clauses flagged by AI

- High-risk scoring outputs

- Any AI-generated recommendation that bypasses standard approval routing

The goal is accountability where legal exposure peaks. Enforcing these checkpoints at runtime, not as optional prompts, keeps decisions defensible when a deal goes sideways or a regulator asks questions.
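The trigger conditions listed above can be expressed as a single runtime check. In this Python sketch, the field names, the $100,000 value threshold, and the 0.7 risk threshold are assumed values that each legal team would set for itself.

```python
from dataclasses import dataclass


@dataclass
class ContractContext:
    value_usd: float
    contains_personal_data: bool
    has_nonstandard_clauses: bool
    risk_score: float  # hypothetical scale: 0.0 (low) to 1.0 (high)
    bypasses_standard_routing: bool


def review_reasons(ctx: ContractContext,
                   value_threshold: float = 100_000,
                   risk_threshold: float = 0.7) -> list:
    """Return every trigger that fired; an empty list means none did."""
    reasons = []
    if ctx.value_usd > value_threshold:
        reasons.append("value above threshold")
    if ctx.contains_personal_data:
        reasons.append("personal data involved")
    if ctx.has_nonstandard_clauses:
        reasons.append("non-standard clauses flagged")
    if ctx.risk_score >= risk_threshold:
        reasons.append("high risk score")
    if ctx.bypasses_standard_routing:
        reasons.append("recommendation bypasses standard routing")
    return reasons


def can_auto_route(ctx: ContractContext) -> bool:
    """Automatic routing is permitted only when no trigger fired."""
    return not review_reasons(ctx)
```

Returning the list of reasons, rather than a bare yes or no, gives the reviewer and the audit trail the same explanation for why the contract was held.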

Explainability and Citation

Every AI output in CLM must be traceable to its reasoning:

- A risk score must cite the specific clauses that drove it

- A clause suggestion must reference the template or precedent it draws from

- An obligation flag must link to the source text

Without citation, legal teams have no ground to stand on when a counterparty challenges an AI-assisted position or a regulator requests justification. Traceability is what makes AI output usable in adversarial contexts.
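A traceability check along these lines could be sketched in Python as follows. The `Citation` and `RiskFinding` types and the clause numbering are hypothetical; the idea is simply that an output is rejected unless every citation can be found verbatim in the contract.

```python
from dataclasses import dataclass, field


@dataclass
class Citation:
    clause_id: str    # e.g. a clause number in the contract
    quoted_text: str  # the exact text the output relies on


@dataclass
class RiskFinding:
    score: float
    citations: list = field(default_factory=list)


def is_traceable(finding: RiskFinding, contract_clauses: dict) -> bool:
    """True only if the finding cites at least one clause and every
    quoted passage actually appears in the clause it points to."""
    if not finding.citations:
        return False
    return all(
        bool(c.quoted_text)
        and c.quoted_text in contract_clauses.get(c.clause_id, "")
        for c in finding.citations
    )
```

A substring match is a deliberately strict assumption here: it catches citations to clauses that do not exist and quotes the model invented, at the cost of rejecting paraphrases.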

Usage Monitoring and Cost Attribution

Governance means knowing how AI is being used operationally:

- Which teams are invoking AI features

- How frequently

- At what cost per interaction

- Whether usage patterns indicate policy drift

Real-time cost tracking per team, model, and matter type enables both financial oversight and early detection of governance gaps, such as users sidestepping controls by using unauthorized AI tools outside the governed perimeter.
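As a small illustration, cost attribution and a basic drift signal can both be derived from the same usage events. The event shape (`team`, `model`, `cost_usd`) in this Python sketch is an assumption, not a standard schema.

```python
from collections import defaultdict


def attribute_costs(events: list) -> dict:
    """Sum cost per (team, model) pair from a list of usage events."""
    totals = defaultdict(float)
    for event in events:
        totals[(event["team"], event["model"])] += event["cost_usd"]
    return dict(totals)


def unapproved_usage(events: list, approved_models: set) -> list:
    """Return events that used a model outside the approved set,
    one possible signal of policy drift."""
    return [e for e in events if e["model"] not in approved_models]
```

Usage example with made-up events:

```python
events = [
    {"team": "procurement", "model": "internal-finetuned", "cost_usd": 0.25},
    {"team": "procurement", "model": "internal-finetuned", "cost_usd": 0.5},
    {"team": "sales-legal", "model": "personal-chatbot", "cost_usd": 0.125},
]
attribute_costs(events)
unapproved_usage(events, {"internal-finetuned"})
```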

Runtime vs. Design-Time Governance: The Critical Distinction

Most CLM platforms govern AI at design time: policies written in documents, model selections locked in during configuration, compliance checkboxes ticked at procurement. It's a reasonable starting point. It also fails in production, where AI models behave differently across contract types, user behaviors, and edge cases no one anticipated during setup.

Runtime governance operates differently. It enforces policy at the moment an AI model is invoked: it intercepts requests, validates outputs, and generates evidence automatically, without relying on human memory or manual process checks. It's the only form of governance that can prevent a violation from occurring rather than detecting it afterward.

Where Governance Failures Occur

The gap between design-time and runtime approaches is where CLM AI governance failures occur. A platform may be configured correctly at deployment but drift over time as:

- Models are updated by vendors

- New AI agents are added to workflows

- Users find workarounds to bypass controls

Runtime enforcement closes this gap by making governance continuous rather than periodic.

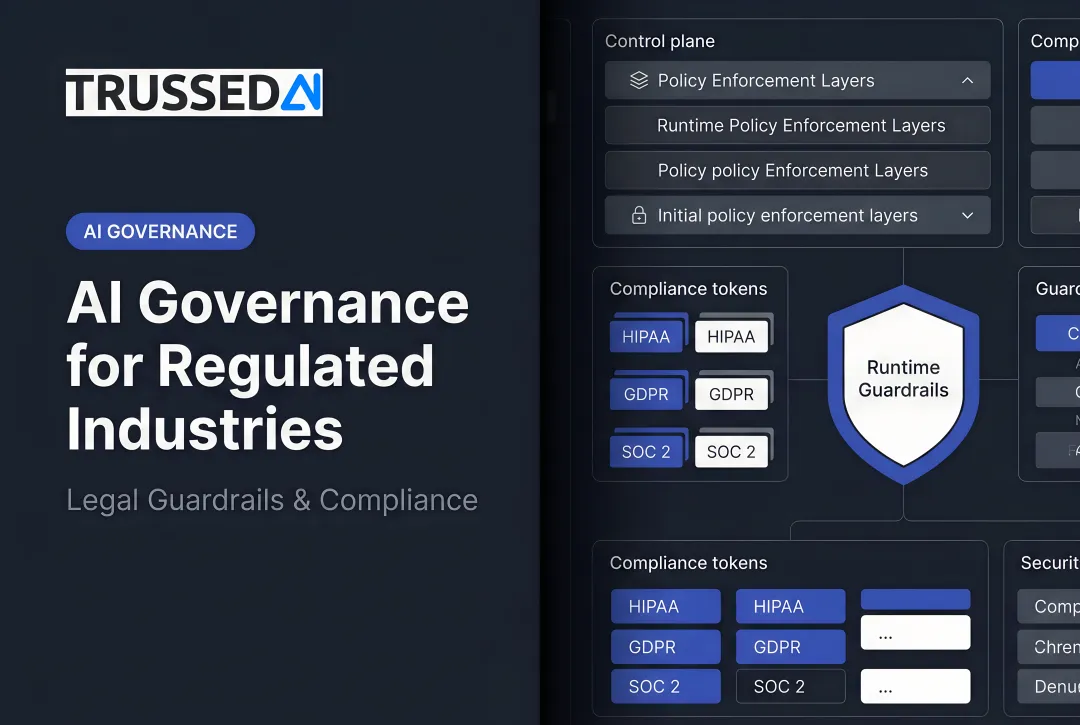

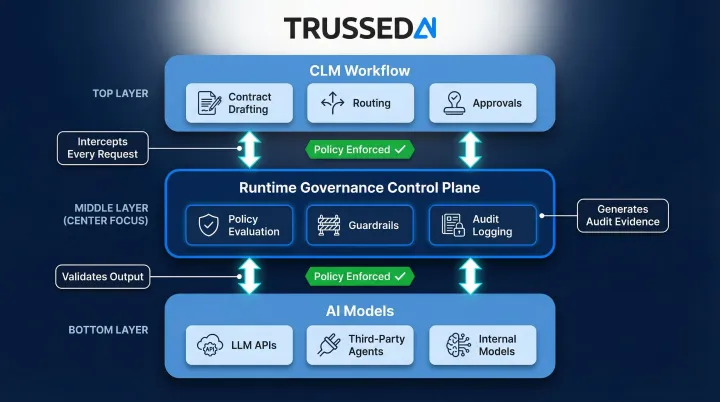

The Infrastructure Layer

Closing that gap requires infrastructure: specifically, a control plane that sits between CLM workflows and the AI models they call, enforcing policies in real time across every interaction. Trussed AI provides this kind of runtime governance layer for enterprises that need to govern AI behavior across CLM and other legal tech environments without rewriting application code.

This control plane operates as a proxy, evaluating policies before every AI interaction completes. For agentic AI systems, this means the following are all authorized against policy before execution:

- Tool calls and API requests

- Workflow triggers and agent handoffs

- Output validation before results surface to users

This prevents unauthorized actions at the execution layer rather than catching them after the fact.
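The proxy pattern itself is simple to sketch. The Python below is a generic illustration under stated assumptions, not Trussed AI's API or any vendor's implementation: `governed_call`, the check signatures, and the log format are all hypothetical.

```python
from typing import Callable, Optional


class PolicyDenied(Exception):
    """Raised when a request or output fails a policy check."""


def governed_call(request: dict,
                  call_model: Callable[[dict], str],
                  pre_checks: list,
                  post_checks: list,
                  audit_log: list) -> str:
    """Run pre-checks, call the model, run post-checks, and log every outcome.

    Each check returns None to pass or a string reason to deny.
    """
    for check in pre_checks:
        reason: Optional[str] = check(request)
        if reason:
            audit_log.append({"request": request, "decision": "denied",
                              "reason": reason})
            raise PolicyDenied(reason)  # the model is never invoked

    output = call_model(request)

    for check in post_checks:
        reason = check(output)
        if reason:
            audit_log.append({"request": request, "decision": "output_blocked",
                              "reason": reason})
            raise PolicyDenied(reason)  # the output never reaches the user

    audit_log.append({"request": request, "decision": "allowed",
                      "output": output})
    return output
```

Because the application only ever calls `governed_call`, a denied request never reaches the model and a blocked output never reaches the user, and both cases leave a log entry.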

How to Evaluate AI Governance Maturity in a CLM Platform

Go beyond asking "does your platform have AI governance?" The right questions reveal whether governance is structural or cosmetic.

Runtime vs. Configuration-Time Enforcement

Ask vendors how policy enforcement actually works in production:

- Vendors who describe governance as "settings configured during deployment" are offering design-time controls: policies that don't adapt to live conditions

- Vendors who describe "real-time policy evaluation before every AI interaction" are enforcing governance where it matters: at the moment of use

Auditability on Demand

Ask vendors to demonstrate audit trail access for a specific AI-assisted decision. The answer tells you everything:

- "We can generate a report within 2-3 weeks" means audit data isn't captured continuously; it's reconstructed retroactively

- "Every interaction is logged automatically with full context" means the audit trail exists as a byproduct of normal operation, not a separate effort

Explainability and Source Transparency

Ask vendors to walk through how a specific AI recommendation is traced back to its source. Black-box outputs are a compliance liability:

- Risk scores that can't be traced to specific clauses offer no audit value

- Clause suggestions without template references mean the AI is operating without accountability, and your legal team can't verify what it acted on

Organizational Questions to Answer First

Before evaluating platforms, clarify your internal baseline; vendor capabilities only matter relative to your own requirements:

- Who owns AI governance in your legal operations team?

- What regulatory frameworks apply to your industry and jurisdiction?

- What is your risk tolerance for AI-generated recommendations in high-value contracts?

These determine which controls are non-negotiable versus nice-to-have for your specific CLM environment.

Integration Requirements

Governance controls only work if they're enforced across every AI touchpoint in a CLM stack: not just the vendor's own models, but third-party document analysis tools, external LLM APIs, and agentic workflow components. Ask whether governance is platform-native or requires a separate control layer to reach all of them. For mixed environments, a unified control plane is the difference between consistent enforcement and policy gaps that vary by tool.

Frequently Asked Questions

What is the difference between CLM and AI-powered CLM?

Traditional CLM organizes and tracks contracts through defined stages using rules-based workflows. AI-powered CLM adds intelligence layers (NLP for clause extraction, LLMs for drafting, ML for risk scoring) that automate analysis and decision-making but also introduce new governance requirements around explainability, auditability, and oversight.

What are AI governance platforms?

AI governance platforms turn AI policies into enforceable controls, managing model inventories, access rules, output monitoring, and audit evidence generation. They operate as control planes sitting between applications and AI models, enforcing governance at runtime rather than relying on after-the-fact review.

What are the best tools for governing AI models in CLM?

Look for tools that combine CLM-native controls (audit logs, explainability, access settings) with infrastructure-level enforcement that spans the full AI stack. When CLM platforms use multiple models or third-party agents, unified policy enforcement is what closes governance gaps.

What controls should a CLM platform have for AI governance?

The six essential controls are model access controls, prompt and output guardrails, complete audit trails, human-in-the-loop checkpoints, explainability with citation, and usage monitoring. All six require runtime enforcement to be effective. Design-time configuration alone creates governance gaps when systems drift or users find workarounds.

How does AI governance in CLM support regulatory compliance?

Governance controls, particularly audit trails, explainability, and access management, produce the documentation required by regulations like the EU AI Act and GDPR. When every AI interaction is governed and logged, compliance evidence is generated automatically rather than reconstructed manually after the fact.